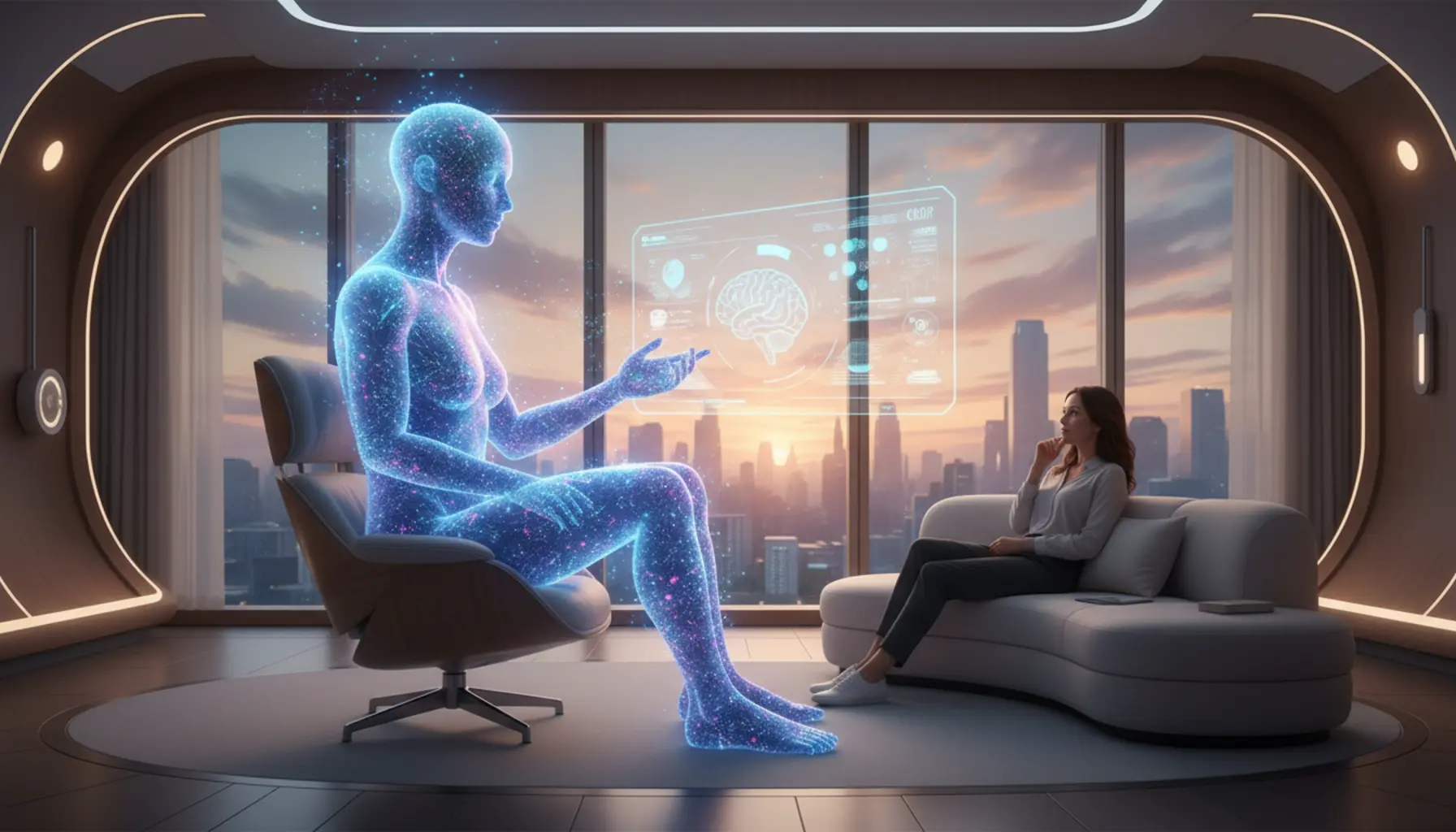

Artificial intelligence is increasingly permeating the world of psychotherapy, offering support and innovative tools for both therapists and patients. Find out how AI may affect the future of professional psychological help and what challenges and opportunities its dynamic development brings.

Table of Contents

- Artificial Intelligence in Psychotherapy

- Can AI Understand the Complexities of Human Nature?

- The Role of AI as a Tool Supporting Therapists

- Troubling Aspects of Replacing Therapists with AI

- Technological Innovations in Psychotherapy

- The Future of AI and Classical Therapy

Artificial Intelligence in Psychotherapy

Artificial intelligence is entering the world of psychotherapy at many levels – from simple chatbots offering emotional support and mood-monitoring apps to advanced analytical systems supporting diagnosis and clinical decisions. In practice, this means that AI can accompany the patient between sessions, help with sustaining motivation for self-work, and relieve therapists from some routine tasks, such as questionnaire analysis, symptom journaling, or reminders about therapeutic techniques. The key is that current AI-based solutions are not designed as therapist replacements, but as a “support layer” meant to enhance the effects of classical therapy. For example, applications based on the principles of cognitive behavioral therapy (CBT) can suggest automatic exercises for thought restructuring, exposure, or mindfulness, based on user-entered data – such as situation descriptions, emotional intensity, or anxiety levels. Learning algorithms detect recurring patterns (e.g., symptom intensification in the evenings or on certain days of the week) and may suggest specific coping strategies to the patient or recommend contacting a therapist when a sudden mood deterioration is noticed. For many, the emergence of AI in mental health signifies a chance to increase accessibility to support – especially where there is a shortage of specialists, long waiting lists, or barriers such as the stigma of going to an office or financial constraints. In this context, AI acts as the “first line of contact”: it can gather initial data, conduct a standardized screening interview, assess suicide risk based on the patient’s answers, and propose next steps such as contacting a doctor, psychotherapist, or a crisis hotline. As a result, the qualification process for therapy or psychiatric consultation can be faster and more organized, allowing specialists to focus on patients with the greatest need for intensive support. 
Artificial intelligence is also being integrated into teletherapy – for instance, as tools that analyze speech content, facial expressions, or speaking tempo during online sessions. Such systems can pick up on subtle changes in voice tone, the frequency of pauses, or even vocabulary choice, which may signal gradual mood shifts, rising tension, or emerging thoughts of giving up. Numerous startups and research teams are working on using this data as “extra eyes and ears” for the therapist, analyzing the therapy process in the background. Such tools can help assess which interventions (homework, emotion regulation techniques, belief work) are associated with improvement and which are not delivering the expected results. At a population level, AI can also analyze large, anonymized clinical data sets, enabling a better understanding of disorder progression, relapse risk factors, or the efficacy of various therapeutic protocols in specific groups (e.g., teenagers with depression, trauma survivors, or patients with anxiety disorders).
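
The kind of recurring-pattern detection mentioned above (for example, mood dropping on certain days of the week) can be illustrated with a very small sketch. It is a simplified illustration, not how any particular app works: the function names, the 1–10 mood scale, the `margin` value, and the diary entries are all assumptions made up for this example.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]

def weekday_mood_averages(entries):
    """Average self-reported mood per weekday name.

    entries: list of (date, mood_score) pairs; the 1-10 scale is an assumption.
    """
    by_day = defaultdict(list)
    for day, score in entries:
        by_day[WEEKDAYS[day.weekday()]].append(score)
    return {name: mean(scores) for name, scores in by_day.items()}

def flag_low_days(entries, margin=1.5):
    """Return weekdays whose average mood is at least `margin` below the overall average."""
    overall = mean(score for _, score in entries)
    averages = weekday_mood_averages(entries)
    return sorted(name for name, avg in averages.items() if overall - avg >= margin)

# Hypothetical diary entries (invented data, for illustration only).
diary = [
    (date(2024, 1, 1), 7), (date(2024, 1, 2), 6), (date(2024, 1, 3), 7),
    (date(2024, 1, 7), 3), (date(2024, 1, 8), 7), (date(2024, 1, 14), 2),
]

print(flag_low_days(diary))
```

A real tool would need far more data, proper statistical testing, and clinical review before suggesting anything to a patient; the sketch only shows the basic idea of comparing a subgroup average with the overall average.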

An important area of AI implementation in psychotherapy is treatment process personalization. Traditionally, a therapist selects the right approach, pace, and set of techniques based on their experience, theoretical knowledge, and client interview. AI can support this decision by analyzing thousands of similar cases – considering symptom types, treatment history, comorbid problems (like addictions or somatic illnesses), personality traits, and patient preferences. These systems can indicate which therapy forms (e.g., CBT, schema therapy, EMDR) and session intensity worked best for patients with similar profiles. The idea is not for AI to decide for the therapist, but to provide a “probability map” to facilitate informed clinical decision-making. At the same time, self-service tools for patients are also developing, such as smart mood diaries, which do more than just log moods; they also suggest explanations for changes – e.g., linking mood drops to sleep deprivation, lack of physical activity, or certain social events. Advanced language models can generate empathetic replies, paraphrase the user’s words, help name emotions, and reflect on their significance in a way reminiscent of basic therapeutic relationship factors such as validation, normalization, or psychoeducation. However, it must be emphasized that even if AI can “simulate” empathy and use supportive language, it still does not experience emotions or bear ethical responsibility for its “reactions.” For this reason, professional systems for mental health applications are designed with numerous safeguards: clear messages stating they don’t replace therapy, content-detection algorithms picking up on life-threatening indications, and procedures for directing users to real-world help when necessary. 
Another important issue is privacy and data security – mental health data is exceptionally sensitive, and its processing by AI systems must comply with applicable regulations (such as the GDPR in the EU and HIPAA in the US) and uphold high anonymization standards. Ethical reflection is also developing: researchers, therapists, and lawyers debate which decisions can be left to machines, which must remain strictly human, how to minimize the risks of algorithmic bias (e.g., less accurate risk estimates for minorities), how to ensure model transparency, and who is liable for errors. As a result, artificial intelligence in psychotherapy today is shaping up as a complex ecosystem of solutions – from simple self-help tools to advanced, clinically supervised decision-support systems – with humans (both patients and qualified psychotherapists) still at the center.

Can AI Understand the Complexities of Human Nature?

Artificial intelligence can process and analyze vast amounts of data faster than any human, but asking if it really “understands” people gets to the heart of its use in psychotherapy. Current AI systems – including tools based on language models – lack consciousness, self-awareness, or personal experience. They operate on statistical patterns: predicting which words, responses, or recommendations are most likely in a given context, based on what the model “saw” in the training data. In practice, this can create a strong impression of understanding, as AI is able to accurately name emotions, reference psychological theories, and even suggest reasonable coping strategies. However, this is an imitation of understanding, not true human understanding rooted in personal experiences of suffering, joy, relationships, loss, or the sense of meaning. The complexities of human nature arise not only from what we say, but also from what we don’t say: our tone of voice, body language, cultural background, life history, and subtle, often conflicting internal motivations. Some of these dimensions may be partially captured in data (e.g., voice tone, words, frequency of contact), but many aspects – like a therapist’s intuition, “sense of timing”, or ability to empathize with a patient’s pain – cannot be easily reduced to an algorithm. Research on AI in mental health shows that models can classify mood from text or voice recordings and even predict depressive symptom severity, but their effectiveness depends on data quality, and whether the individual’s context is similar to that of the training set. This means that AI can “understand” some patterns well – e.g., descriptions of depressive episodes typical for a certain culture – but can fail with minorities, other cultures, dialects, or those whose experiences and ways of describing the world deviate from statistical norms. 
From a psychotherapy perspective, this is crucial, because “atypical” stories often require the greatest attention, flexibility, and therapist sensitivity. Another issue is the complexity of human motivation: a patient might unconsciously sabotage therapy, test boundaries, or enact past relationships with parents or partners in the therapist relationship. An experienced therapist can recognize this, sense tension in the therapeutic relationship, and address it during the process. An AI system can detect contradictions in statements, but it won’t ever “feel” the relational tension, since it lacks emotions or a personal history as a reference point. Its “empathy” is simulated – it reproduces language patterns typical of empathetic answers. A patient may subjectively feel heard, but this effect depends on the algorithm’s skill in generating the right language, not genuine empathy. This raises ethical questions: is informing the patient that they’re chatting with AI enough if the tool’s style is designed to imitate a caring human as closely as possible? And does the lack of real feelings on the “interlocutor’s” side limit the depth of achievable insight, especially in relational therapies like psychodynamic or humanistic approaches, where the authentic encounter between two people is key? Finally, human nature includes paradox and contradiction, and therapy often involves learning to live with uncertainty, a lack of simple answers, and acceptance of limits to one’s own control. AI, by definition, aims to reduce uncertainty – to generate the most probable and “consistent” answer. This can reinforce the expectation that every pain should have a quick, algorithmic solution, even though real healing processes are slow, nonlinear, and unpredictable. 
For these reasons, even the most advanced tools are likely to remain experts at recognizing and classifying patterns, rather than fully grasping the existential depth of human experience – which escapes purely statistical frameworks and is the very core of many psychotherapy modalities.

Although modern AI systems may seem surprisingly “human” in their manner of communication, their architecture and mode of operation differ radically from the human mind, which limits their capacity to comprehend the complexity of a patient’s experience. The human mind is shaped over years within a dense network of relationships, cultures, values, traumas, and desires, with embodiment – experiencing the world through the body and senses – playing a key role. AI has no body, has never felt physical pain, and does not sleep, fall ill, fear death, or form emotional bonds with others, so all its “references” to such experiences are indirect, mediated by textual or visual data. Psychotherapy, by contrast, often depends on the therapist’s ability to resonate emotionally and physically with the patient’s narrative, e.g., by feeling tension, fatigue, anger, or powerlessness, and then to use that reaction (countertransference) as information for the process. AI can at most “guess” that, in a given situation, a caring or concerned response would be appropriate. Critically, current AI models are trained on historical data, meaning they also reproduce the biases, stereotypes, and errors present in it. In psychotherapy, this can have serious consequences: people from minority groups may get less adequate, more stereotyped answers, and narratives about experiences that are rare in data sets (e.g., traumas specific to a community) may be understood less well and supported less effectively. A well-calibrated system can partly mitigate these risks but won’t eliminate them, as AI lacks an autonomous moral compass or an inherent sense of justice – it is guided by the patterns in its data and by its coded rules. Meanwhile, the complexities of human nature frequently involve moral dilemmas, value conflicts, guilt and shame, or existential questions that require not just information but also wisdom, life experience, and reflection on good and evil. 
Equally important, people change through relationships: the therapist, hearing a client’s story, also undergoes an internal process, learns, revises beliefs, and sometimes feels moved or helpless. This mutuality – though asymmetrical – builds authentic contact in therapy. AI remains static in this sense: its change is the effect of model updates by a technical team, not the result of personal experience. Even if the system “adjusts responses” based on user interactions, it doesn’t experience them; it only updates numerical parameters or stored context. On the other hand, AI’s lack of emotions can, in some areas, prove advantageous – the algorithm does not judge, tire, or react impulsively to what the patient says, and so does not project its own unresolved issues onto the patient. For some people, this can feel safer than talking to a human therapist. AI may also pick up patterns a person could miss – e.g., subtle but systematic wording changes signaling a deepening crisis, before the patient or therapist is aware of it. But this doesn’t mean AI “understands” human nature better; it simply analyzes some fragments of data more efficiently. Ultimately, the difference between pattern analysis and a deep, embodied experience of another person remains fundamental; AI can become a tool for capturing some aspects of human complexity, but it is not an entity capable of fully, existentially understanding human life.

The Role of AI as a Tool Supporting Therapists

Artificial intelligence does not compete with the therapist for the “therapist’s chair,” but gradually becomes a digital assistant that helps therapists make better use of their time, energy, and competencies. In clinical practice, this mainly means automating administrative tasks, preliminary patient triage, and providing data that support therapeutic decisions. AI systems can analyze registration forms, online interviews, or brief screening questionnaires for symptom severity, suicide risk, comorbid disorders, and intervention urgency. This way, the therapist receives an organized picture of the patient’s situation even before the first meeting, shortening the time needed for preliminary diagnosis and speeding up referral to the most appropriate help – such as individual or group psychotherapy, psychiatric consultation, or crisis intervention. Some centers also experiment with algorithms that suggest priority on waiting lists, based on data about suffering severity, suicide attempt history, or lack of social support. AI can also support therapy monitoring: machine learning-based apps analyze patients’ self-report data (mood scales, diary notes, answers in short questionnaires) and passive data from mobile devices (such as changes in sleep rhythm, activity, or communication). Based on this, reports for the therapist are generated, highlighting subtle functional declines the patient may not notice or mention in session. Thus, the therapist can react sooner to deterioration, modify the treatment plan, or introduce crisis interventions before symptoms worsen. Importantly, such mechanisms do not “replace” clinical assessment – they act more as early warning systems that increase the chance for timely response. In treatment planning, AI is used as a tool supporting therapy method choice. 
By analyzing large anonymized data sets – for example, the therapy progress of thousands of patients with similar diagnoses, comorbidities, and personality traits – models can suggest which approaches (CBT, schema therapy, DBT, ACT, etc.) were statistically associated with better treatment response in similar cases. The therapist retains full decision-making freedom but gains an additional, data-driven perspective that may help refine the individual therapy plan. Similarly, AI can support the supervision process: session analysis tools (working on text or voice recordings obtained with informed consent and under strict security) show how much time is spent asking open questions, reflecting feelings, or providing psychoeducation, versus offering the therapist’s own interpretations. This can support self-assessment of work style, closer attention to the patient’s responses, and the development of communication skills. There are already prototypes of systems that catch potential micro-omissions, such as missed signs of suicide risk, changes in substance use, or domestic violence, and flag them for reconsideration by the therapist.

The second area where AI plays an important supportive role is accompanying the patient between sessions and relieving the therapist from tasks that don’t require direct contact. Therapeutic chatbots, mood-monitoring apps, or reminder systems help patients maintain continuity in the change process: encouraging homework, breathing exercises, exposure, or mindfulness practice. Machine learning algorithms analyze which exercises a given user uses most often, under which circumstances they abandon them, and which factors help sustain engagement. Based on this, the system can suggest more tailored interventions, e.g., shorter mindfulness sessions for those with focus difficulties, flexible reminders aligned to daily rhythm, or motivational messages based on past successes. For therapists, this means that some psychoeducation, repetitive instructions, or progress monitoring shifts to technology, while session time can be dedicated to deeper work on relationships, meaning, and emotions. Many clinical teams use AI for work organization as well: smart scheduling systems analyze office occupancy, typical session lengths, frequency of cancellations, and patient preferences to optimize timetables and shorten waiting times. For therapists in the public sector, burdened with high patient loads and paperwork, this is real support in reducing “bureaucratic burnout.” AI may also assist therapy teams in analyzing epidemiological trends – e.g., a sudden rise in anxiety referrals among youth, or burnout symptoms among healthcare workers – which helps plan preventive and intervention programs. Crucially, the role of AI must be clearly defined and transparent to both therapist and patient: the system should be presented as an assistive tool, not a “second therapist.” The patient must know which elements of their data are analyzed automatically, what conclusions are drawn, and who ultimately holds responsibility for clinical decisions. 
Ethically, maintaining patient agency is essential – AI cannot become an “invisible judge” whose suggestions are accepted without reflection. The most promising models are collaborative, in which the therapist uses technology to broaden their understanding of the patient, but maintains critical thinking, sensitivity to cultural context, and the person’s unique history. In this model, AI becomes a tool that organizes data, aids memory, increases access to help, and allows faster risk response, while the essence of the therapeutic process remains human presence, the relationship, and responsible clinical decision-making.

Troubling Aspects of Replacing Therapists with AI

Replacing therapists with AI would entail a range of problems going far beyond technical effectiveness. The most important is the absence of a real relationship and of responsibility – in therapy, the therapeutic bond itself, not just techniques or protocols, is one of the strongest healing factors. The algorithm bears no moral responsibility for its “decisions,” nor does it have a personal history or identity a patient can resonate with. Yet, in work with trauma, loss, or shame, a person needs another person who responds authentically to their experience, not a system that merely generates correctly worded statements. Replacing the therapist with an AI system raises the question: who really accompanies the patient – a specialist who is responsible for their choices, or an impersonal statistical model shaped by opaque business interests and design decisions? This lack of transparency can undermine trust, especially if the patient does not fully understand how the system arrives at certain “therapeutic recommendations.” In practice, this may lead to reducing complex mental problems to a few symptom categories and ready-made intervention schemes, which are scalable but poorly reflect the often chaotic individuality of a person’s reality. Another troubling issue is the automation of risk assessment decisions – e.g., regarding the risk of suicide or violence. AI may be highly sensitive to certain language patterns or behaviors and raise alarms too often (causing “alarm fatigue,” in which warnings are increasingly discounted), or, conversely, miss subtle, culturally influenced signals absent from the training data. In borderline situations, where intuition, experience, and the ability to pick up on “something between the lines” matter, over-reliance on automatic classifications can result in tragic errors. There’s also the prospect of normalizing contact with a machine instead of a human in domains that demand closeness, co-presence, and emotional regulation through relationship. 
If patients – for economic or systemic reasons – are more often directed to chatbots than to human therapists, this may reinforce the sense that their suffering isn’t “worth” human time and can be handled like a helpdesk ticket. For those with a history of rejection or neglect, such a message can reopen old wounds. The social consequences are also significant: automating therapy can deepen inequalities if wealthier clients access human-run therapy while the less privileged receive cheap or free AI solutions. This would effectively create a two-tier therapeutic system in which human contact becomes a privilege, not the standard of care.
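
The tension described above between alarming too often and missing real signals can be made concrete with a toy example. The sketch below assumes a hypothetical system that assigns each case a risk score between 0 and 1 and raises an alert when the score reaches a threshold; the scores, labels, and function name are invented purely for illustration and do not come from any real tool or data set.

```python
def alert_counts(scored_cases, threshold):
    """Count false alarms and missed cases for a given alert threshold.

    scored_cases: list of (risk_score, truly_at_risk) pairs (hypothetical data).
    Returns a (false_alarms, missed_cases) tuple.
    """
    false_alarms = sum(
        1 for score, at_risk in scored_cases if score >= threshold and not at_risk
    )
    missed_cases = sum(
        1 for score, at_risk in scored_cases if score < threshold and at_risk
    )
    return false_alarms, missed_cases

# Invented example: three people truly at risk, four not.
cases = [
    (0.9, True), (0.7, False), (0.6, True), (0.4, False),
    (0.35, True), (0.2, False), (0.1, False),
]

for threshold in (0.3, 0.5, 0.8):
    false_alarms, missed_cases = alert_counts(cases, threshold)
    print(f"threshold={threshold}: false alarms={false_alarms}, missed={missed_cases}")
```

In this made-up data, lowering the threshold removes missed cases but adds false alarms, and raising it does the opposite. No threshold removes both kinds of error, which is one reason such scores are better treated as input to human judgment than as decisions.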

Especially problematic is the way AI design decisions can imperceptibly shape psychological norms. Models are trained on data – disorder descriptions, therapy protocols, session recordings – that always reflect particular paradigms, cultures, and biases. If such algorithms gain “objective expert” status, their built-in assumptions can define what is “healthy,” “normal,” or “dysfunctional,” marginalizing minority or non-normative experiences and lifestyles. Imagine a non-heteronormative person, raised in a homophobic environment, interacting with an AI model trained mostly on data from a conservative population – there is a high risk that the model will subtly pathologize their identity or ignore the context of social violence. Furthermore, AI systems are typically built around an optimization logic: they aim to minimize symptoms, shorten treatment time, and improve intervention “efficiency.” However, psychotherapy is often nonlinear and may require staying with suffering for a longer time, confronting difficult truths, or even a temporary “worsening” of functioning to achieve deeper change. An algorithmic emphasis on efficiency may promote “quick fixes” and surface-level symptom reduction over addressing underlying causes. Added to all this is the fundamental issue of data privacy and security. Therapists are bound by professional confidentiality and clear ethical codes. AI systems, often developed by large tech companies, function in an ecosystem where data is a valuable business resource. Data leaks, misuse (e.g., for marketing or credit assessment), or government acquisition for surveillance are real risks, especially if systems are not designed with data minimization in mind. A patient confiding in an algorithm may not fully understand where their data physically resides, how long it is stored, who can access it, or what analyses are performed on it. 
Replacing therapists with AI without strict and transparent legal and ethical frameworks would mean transferring life’s most sensitive aspects – thoughts, fears, fantasies – into an environment inherently oriented toward scaling, monetization, and standardization.

Technological Innovations in Psychotherapy

Rapid AI advances are broadening the spectrum of technological innovations in psychotherapy – from simple mood-tracking apps to complex predictive systems integrating multiple data sources. One of the most visible trends is therapeutic chatbots and conversational assistants, based on language models capable of natural conversation. Such tools – available 24/7 on a smartphone or computer – help users regulate emotions, practice CBT techniques, keep thought and mood journals, and easily implement session recommendations. Unlike traditional hotlines, AI systems are scalable, allowing many more people to be supported with the same personnel resources. At the same time, mobile apps integrated with digital biomarkers are more common, using phone and smartwatch sensors (physical activity, sleep, phone usage) to catch early signals of depression relapse, mania, or anxiety crisis. Algorithms analyze behavioral patterns, daily rhythm changes, and users’ written language to alert the therapist of potential patient deterioration and enable quicker intervention. Next-generation teletherapy platforms are also advancing, going beyond just video calls to combine calendars, secure document exchange, interactive exercises, automatic reminders, and AI modules helping patients reinforce skills between sessions. For example, the system may propose micro-interventions matched to current mood tracked in real time, or adapt the pace to the user’s engagement level. Clinics are also introducing tools for automatic transcription and analysis – software recognizes speech, turns it into text, and then analyzes the language used, speaking tempo, and emotional tone. This gives therapists statistical details about session course, dominant themes, and relationship dynamics, aiding supervision and style improvement. 
Advanced machine learning algorithms are used to build clinical decision support systems: analyzing big data from research, medical documentation, and therapy records helps identify response patterns to treatment and suggest intervention sets most likely to work for each patient profile, symptom history, and previous therapeutic experience.

On the horizon are also innovations that expand the classical concept of the “therapy office.” Virtual reality (VR) and augmented reality (AR) open new possibilities for working with anxiety disorders, phobias, or PTSD. Instead of just describing difficult situations verbally, the patient can face anxiety-triggering stimuli in a controlled digital environment, with therapist support and continuous psychophysiological monitoring. The system gradually increases exposure, and the software – often with AI elements – adapts exercise scenarios to the participant’s reactions, making the process more precise and predictable than classic imaginal exposure techniques. Another intriguing path is “digital therapeutics” – certified and, in some health systems, reimbursed therapeutic programs delivered as apps or online platforms, which undergo formal clinical evaluation much as drugs do. In mental health, these include CBT modules for depression and anxiety, addiction relapse prevention, and family psychoeducation tools. Increasingly, digital therapeutics are integrated with AI modules that personalize the program’s flow and content. The next step is multimodal systems, which combine analysis of text, speech, facial expressions, and physiological parameters (such as heart rate and heart rate variability, HRV) to build a fuller picture of the patient’s emotional state; such solutions could help recognize suicidal or psychotic crises early. At the same time, explainable AI models are being developed that can show the therapist which features and data a given recommendation is based on. This is essential to avoid “black boxes” in a field as sensitive as mental health and to enable professionals to critically assess automatic suggestions. Behind these innovations is a trend toward building secure, encrypted data ecosystems in which patients’ mental health information is processed locally on the device or on tightly regulated medical servers. 
As AI tools proliferate, “privacy by design” – engineering technologies to protect confidentiality, minimize data range, and ensure patient transparency from the very start – is increasingly important. None of this eliminates the need for human contact, but it does change how psychotherapy is delivered: some interventions move into the digital space, sessions become more focused, and therapists have richer, more organized information at hand to make better clinical decisions and foster more effective recovery processes.

The Future of AI and Classical Therapy

The future of AI in relation to classical therapy is increasingly described not in terms of “replacement,” but rather “collaboration” and a restructuring of the entire mental healthcare system. The most likely scenario envisions hybrid treatment models, where the first line of support consists of smart apps and chatbots, and human-therapist contact marks the stage of deep, specialist work. AI may act as a “filter” – initially assessing symptom severity, suggesting urgency, and directing patients to appropriate forms of help: from psychoeducation and digital self-help to intensive inpatient therapy. This model fits public health systems with specialist shortages and long waiting times well – AI can fill the “time gap” by providing structured support between reaching out for help and the first visit. Simultaneously, technology for risk prediction (e.g., suicide) and mental state deterioration will influence the organization of crisis duty and interventions – the classical therapist will receive integrated alerts generated by systems analyzing app data, mood logs, wearables, or teletherapy records, which may significantly improve clinical decision accuracy, but also requires a new kind of responsibility for responding to signals. As AI becomes better at analyzing natural language, voice tone, or micro-expressions, therapists will have “a second pair of eyes” to diagnose emotional patterns, subtle behavioral shifts, and their correlations with therapy progress – but interpreting these data, making sense of them in the context of the patient’s life story, and determining next steps will remain a human task. 
In practice, this could transform the therapist’s role from a “detective” solving clinical riddles to a “conductor” orchestrating various digital tools and healthcare resources, who must critically judge algorithmic recommendations, recognize their limitations (e.g., regarding minorities or rare disorders), and integrate quantitative data with the qualitative experience of seeing the patient in person. Therapist training will change too – curricula will likely include basic AI model principles, understanding algorithm errors, training data biases, legal issues, and digital competencies such as choosing and critically appraising tools, working with patients using therapeutic apps, or incorporating their data into case conceptualization. This may cause concern in some quarters about the “technical” distortion of the profession, but also raise the prestige of specialists who successfully combine clinical knowledge with new technology insights.

The transformation of AI-classical therapy relations will also be shaped by legal regulations and social expectations, which will determine where acceptable automation ends and areas reserved solely for humans begin. We can expect standards to specify which clinical decisions AI may support and which must always be verified by a licensed therapist or psychiatrist – for example, in diagnosis, medication modifications, hospitalization decisions, or risk assessment. Professional psychotherapy organizations will likely establish guidelines for ethical AI tool use: informing patients about algorithm involvement in care, principles for storing and anonymizing data, consent for using data to further train models, and handling situations where AI recommendations conflict with the therapist’s clinical intuition. On a societal level, what matters most is how patients rate their “digital therapy” experience – will they trust AI systems as first contact, or see them as a backup tool for when specialist access is limited? Much depends on the quality of the user experience: transparency, clear language, avoiding false promises (“I’m your therapist”), and the ease of switching from AI to human support in a crisis. It’s also possible that in the future, there will be new forms of “AI-assisted therapy,” where some tasks – e.g., cognitive exercises, automatic belief monitoring, VR exposure – are completed with system help, while sessions with a therapist concentrate on relational work, deeper meaning, emotion regulation, and integrating changes into daily life. This opens opportunities for tailored treatment paths based on preferences and capacities: those valuing anonymity and self-reliance may use intensive digital components, while those for whom being “seen” and “heard” by another is vital will have priority access to traditional therapy. 
Systemically, the future may resemble a multi-level ecosystem where AI serves as an intelligent supporting infrastructure and classical therapy remains the core of healing, requiring policymakers and practitioners to constantly balance efficiency, accessibility, safety, and the humanist dimension of therapeutic work.

Summary

Artificial intelligence is gaining increasing recognition in various fields, including psychotherapy. While AI can analyze data and provide recommendations, it is not able to fully comprehend human emotions and the complexities of human nature. For this reason, AI is best treated as a tool that supports therapists rather than one that replaces them. The future may bring innovations that improve therapy’s effectiveness, but because it lacks personal contact and genuine empathy, AI will remain a complement to traditional therapeutic methods.